AI is here to stay, and putting the genie back in the bottle is no longer an option. Many people are concerned about this. What will happen to the many jobs that AI tools can do better, faster, and cheaper? What will happen to the internet once AI-generated data becomes so vast that it drowns out organically generated data?

The answer is: we don’t know!

Despite this, AI still has a long way to go. Self-driving cars make judgment errors that cause people to lose their lives, and these errors are sometimes based on faulty data. Data makes all the difference.

There are many reasons why this is a huge problem. One experiment tried to teach AI how to recognize a skin tumor. AI was fed with many biopsy images and images of regular moles to recognize a malignancy. The problem is that images with a tumor usually had a ruler next to them. So, what did AI draw as a conclusion? If there’s a ruler in the photo, it’s probably a tumor. Not a great start!
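A shortcut like this can often be spotted before training by checking how well an incidental feature predicts the label on its own. The snippet below is a minimal sketch of that check in Python. The field names `has_ruler` and `malignant`, the helper `label_rate_by_feature`, and the toy records are all made up for illustration and are not data from the experiment.

```python
def label_rate_by_feature(samples, feature, label="malignant"):
    """Share of positive labels among samples with and without a feature."""
    counts = {True: [0, 0], False: [0, 0]}  # [positives, total] per group
    for sample in samples:
        group = bool(sample[feature])
        counts[group][0] += int(sample[label])
        counts[group][1] += 1
    return {
        group: (positives / total if total else None)
        for group, (positives, total) in counts.items()
    }

# Hypothetical toy records: rulers appear mostly in tumor photos.
samples = [
    {"has_ruler": True, "malignant": True},
    {"has_ruler": True, "malignant": True},
    {"has_ruler": True, "malignant": True},
    {"has_ruler": True, "malignant": False},
    {"has_ruler": False, "malignant": False},
    {"has_ruler": False, "malignant": False},
    {"has_ruler": False, "malignant": False},
    {"has_ruler": False, "malignant": True},
]

rates = label_rate_by_feature(samples, "has_ruler")
print(rates)
```

If the two rates differ sharply, as they do in this toy set, the feature is a likely shortcut, and the images should be rebalanced or the ruler cropped out before training.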

The thing is that AI is only as good as the information it feeds on. This is why the integrity and usability of the data that goes into it matter so much. Here are some of the best practices to help ensure this and slowly build more trust in AI.

AI is just recycling content

The biggest problem with the current state of AI is that it can only recycle already existing data into new content. In other words, you have a “garbage in, garbage out” scenario.

The machine learning process is great, but the result will always be disappointing if it operates on incomplete or faulty data. Feeding AI with incorrect data is not only useless but actively harmful.

The way to overcome this is to ensure that AI has access to a sufficient amount of data. If AI relies on a single piece of data and that piece is faulty, then 100% of the sample is corrupt. However, if many different sources point to the same result, the likelihood of good information is higher.

There is no guarantee here, again. If you did a count of whether the Earth was circling the Sun or vice-versa in the 1300s, you would get an overwhelming result in favor of the latter. Moreover, the written data to support this claim would also be overwhelming. So, an AI operating in this period would reach the only logical conclusion - a bad one.
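The idea of cross-checking sources can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the function name `consensus` and the thresholds `min_sources` and `min_share` are hypothetical choices, not an established method.

```python
from collections import Counter

def consensus(claims, min_sources=3, min_share=0.6):
    """Return the majority claim, or None if support is too thin.

    min_sources and min_share are illustrative thresholds, not standards.
    """
    if len(claims) < min_sources:
        return None  # a single source agrees with itself 100% of the time
    value, count = Counter(claims).most_common(1)[0]
    if count / len(claims) < min_share:
        return None  # the sources disagree too much to trust any answer
    return value

single = consensus(["A"])                 # one source only
many = consensus(["A", "A", "A", "B"])    # three of four sources agree

print(single, many)
```

Note that this only measures agreement, not truth. Fed with sources from the 1300s, it would confidently return the geocentric answer, which is why evaluating the sources themselves still matters.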

Now, recycling bad data creates more bad data. Given the pace at which AI can create data, this will soon be an alarming matter. The process has already started, which is why moderating content and putting heavy emphasis on evaluating the source before using anything are so important.

What about user data and cookie consent?

One of the latest stories in the world of digital marketing is the idea that AI might, in the future, completely replace the need for cookies.

The theory is that AI can process so many different patterns of user behavior that it can make effective ads even without cookies. In other words, AI doesn’t have to track users; it can just observe their behavior and get all the necessary information.

Since some people fear cookies, they might welcome this AI advertising revolution. The problem is that to get to the point where it can analyze this user data, AI needs to have it in huge quantities. How will it get it? Through cookies.

Moreover, while AI is getting more accessible, and it’s not impossible to imagine a future in which this software remains open-source, most enterprises still can’t rely on it. Although the technology is progressing rapidly, it is just not there yet. This is why you shouldn’t ignore your cookie consent tool just yet. Chances are that it will remain useful for years to come.

When it comes to preference-based marketing, cookies are just unparalleled. Even when AI becomes much more sophisticated, it won’t be able to do much more than make an accurate guess. Information based on actual observation is always going to be more accurate.

In other words, the only thing that cookieless marketing can aspire to achieve is to eliminate cookies. This will be a huge stride forward for some people who are uncomfortable with cookies. However, regarding efficiency, it is unlikely that AI will ever overcome cookies.

End-to-end usability testing

One of AI's biggest challenges is its inability to conduct accurate end-to-end usability tests. It still relies on humans for user experience input and usability selection.

Since AI can process huge volumes of data in seconds, its decisions seem instantaneous and intuitive. In reality, nothing could be further from the truth. Every decision that AI makes is data-driven.

Bad data leads to a bad decision, which, as a result, creates a scenario where users lose trust in the system as a whole. So, how do you regain this trust? Transparency is the easiest way to accomplish this.

In the introduction, we mentioned an example from the medical field. A mistake is not so scary if it’s clear how it happened. Knowing the cause also allows you to avoid making the same mistake in the future.

Another problem is that many people believe AI to be infallible. So, the illusion shatters when the first mistake occurs, and people are left disappointed. The recent leap in AI has made people forget that this is still a technology in its early development stages. A bit more patience and understanding are necessary.

One more solution to this problem lies in open source. Being transparent regarding how it operates makes it easier for people to trust it. This gives the user a sense of control and allows everyone to move at the same pace.

Users also need to be able to report an error. By adding a button to report an error, you’re already issuing a disclaimer. What you’re virtually saying is:

*“Yes, mistakes are possible. They are so likely that you need a button to report it when it happens to you. Because it probably will.”*

This way, you’re setting the bar a bit lower.

Different principles of trust

Different AI developers follow different principles. The majority of these are common sense and are what you would expect them to be. Still, it’s worth listing them, just in case.

AI should assist human intelligence and never aim to replace it. In fact, it may even help minimize the risk of burnout.

It should be socially beneficial, fair, safe, and accountable to humans.

AI should be made to see privacy protection as a high priority.

An AI should have its own set of ethical principles.

Corporate responsibility needs to be a huge factor in this field.

Of course, when it comes to the use of AI, some have greater concerns than replacing humans in the workplace. Some strongly oppose using AI to assist in autonomous weapons systems. Terminator and Skynet are some of the first associations that most people have.

The thing is that while technology is rapidly advancing, sociology needs to find a way to step up. We need to learn how to use AI safely, which is hard, seeing as most people don’t trust the system.

By making the technology more transparent and improving the moderation of data fed into the system, perhaps we’ll put some of their minds at ease. Still, philosophers and IT experts need to work hand in hand.

The human factor

Even more importantly, people need to trust AI producers. The producers themselves need to follow regulations and build compliance into their platforms. The thing is that some of these producers operate under different jurisdictions. Compliance laws in the United States and the EU are not the same. This difference can be a pretty critical one.

Lastly, policymakers need to know what they’re doing. If there’s one thing that the recent TikTok testimony before Congress has shown us, it’s that legislators often have a tenuous grasp of even simpler technological concepts. This will have to change.

The AI situation is serious and may shape humankind's future for decades and centuries to come. Sure, we can always amend bad decisions, but with so much at stake, this might not be a risk we can afford to take.

Building trust in AI is the only path toward its full integration

To fully utilize the power of AI, we need to be able to trust it. This won’t come easy.

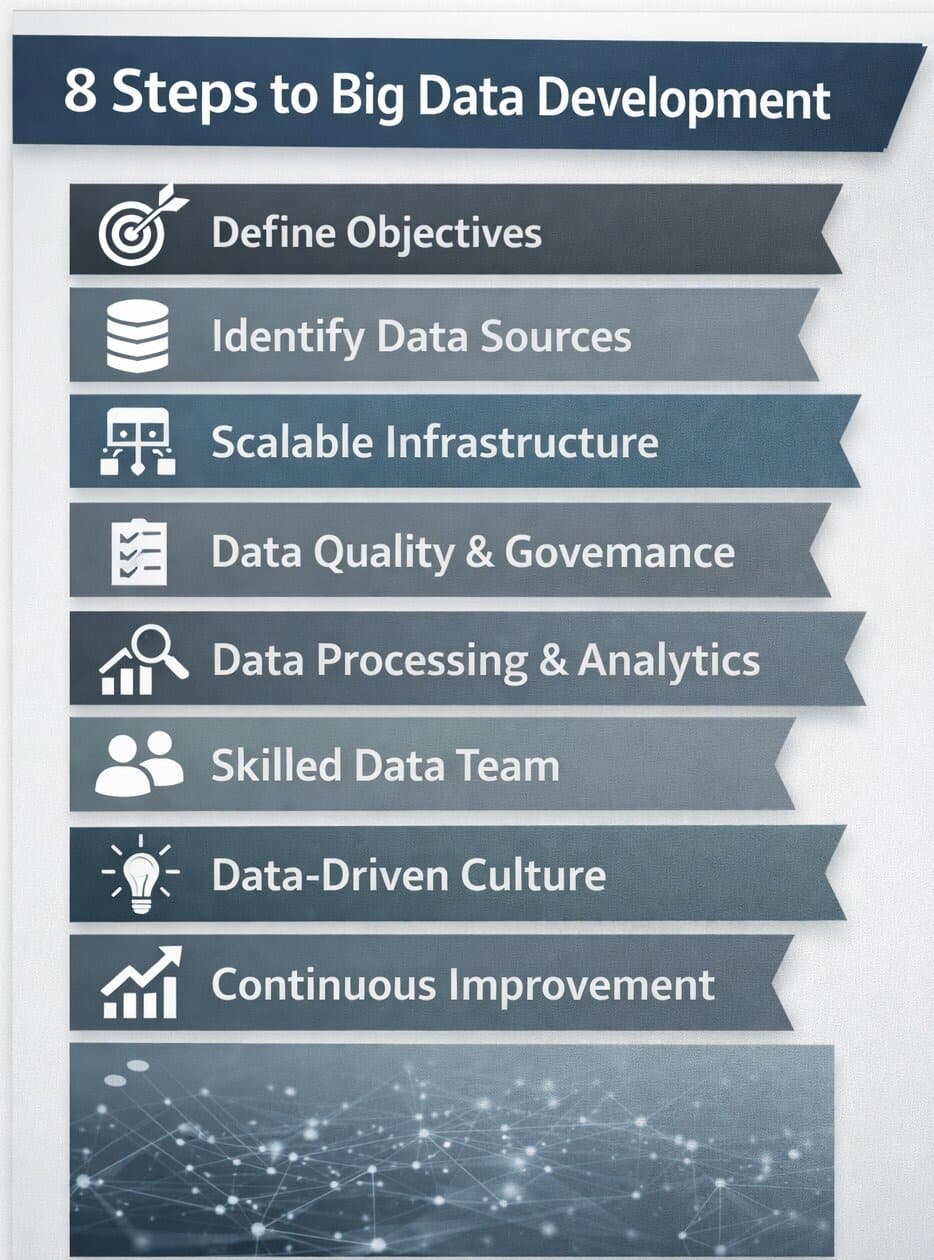

First, we must be cautious about the integrity and usability of the data we feed into the system. Your result will depend on that usability, and “garbage in” will always result in “garbage out.”

So far, AI still can’t tell usable data from useless data with great accuracy. While this process is still human-assisted, there must be no slip-ups.

Developing ethical principles for using AI and regulating it is vital to helping people trust and embrace this technology.